Introduction to collapse

Advanced and Fast Data Transformation in R

Sebastian Krantz

2021-06-27

Source:vignettes/collapse_intro.Rmd

collapse_intro.Rmdcollapse is a C/C++ based package for data transformation and statistical computing in R. It’s aims are:

- To facilitate complex data transformation, exploration and computing tasks in R.

- To help make R code fast, flexible, parsimonious and programmer friendly.

This vignette demonstrates these two points and introduces all main features of the package in a structured way. The chapters are pretty self-contained, however the first chapters introduce the data and faster data manipulation functions which are used throughout the rest of this vignette.

Notes:

Apart from this vignette, collapse comes with a built-in structured documentation available under

help("collapse-documentation")after installing the package, andhelp("collapse-package")provides a compact set of examples for quick-start. A cheat sheet is available at Rstudio.The two other vignettes focus on the integration of collapse with dplyr workflows (recommended for dplyr / tidyverse users), and on the integration of collapse with the plm package (+ some advanced programming with panel data).

Documentation and vignettes can also be viewed online.

Why collapse?

collapse is a high-performance package that extends and enhances the data-manipulation capabilities of R and existing popular packages (such as dplyr, data.table, and matrix packages). It’s main focus is on grouped and weighted statistical programming, complex aggregations and transformations, time series and panel data operations, and programming with lists of data objects. The lead author is an applied economist and created the package mainly to facilitate advanced computations on varied and complex data, in particular surveys, (multivariate) time series, multilevel / panel data, and lists / model objects.

A secondary aspect to applied work is that data is often imported into R from richer data structures (such as STATA, SPSS or SAS files imported with haven). This called for an intelligent suite of data manipulation functions that can both utilize aspects of the richer data structure (such as variable labels), and preserve the data structure / attributes in computations. Sometimes specialized classes like xts, pdata.frame and grouped_df can also become very useful to manipulate certain types of data. Thus collapse was built to explicitly supports these classes, while preserving most other classes / data structures in R.

Another objective was to radically improve the speed of R code by extensively relying on efficient algorithms in C/C++ and the faster components of base R. collapse ranks among the fastest R packages, and performs many grouped and/or weighted computations noticeably faster than dplyr or data.table.

A final development objective was to channel this performance through a stable and well conceived user API providing extensive and optimized programming capabilities (in standard evaluation) while also facilitating quick use and easy integration with existing data manipulation frameworks (in particular dplyr / tidyverse and data.table, both relying on non-standard evaluation).

1. Data and Summary Tools

We begin by introducing some powerful summary tools along with the 2

panel datasets collapse provides which are used throughout this

vignette. If you are just interested in programming you can skip this

section. Apart from the 2 datasets that come with collapse

(wlddev and GGDC10S), this vignette uses a few

well known datasets from base R: mtcars, iris,

airquality, and the time series Airpassengers

and EuStockMarkets.

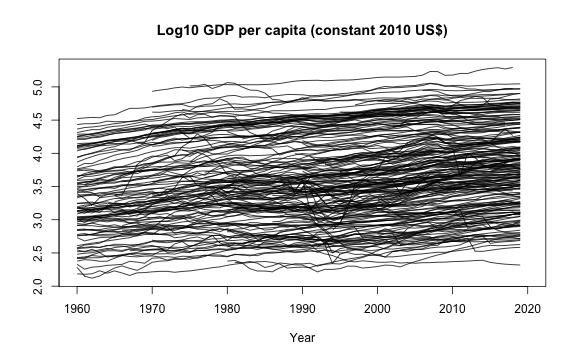

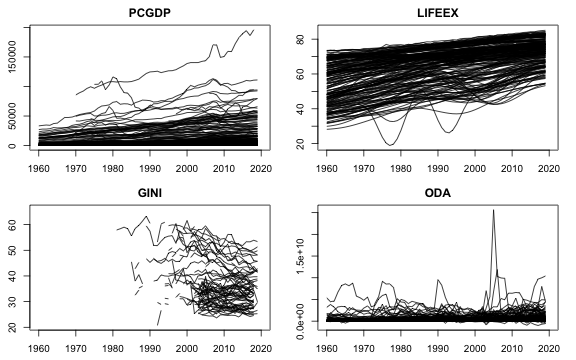

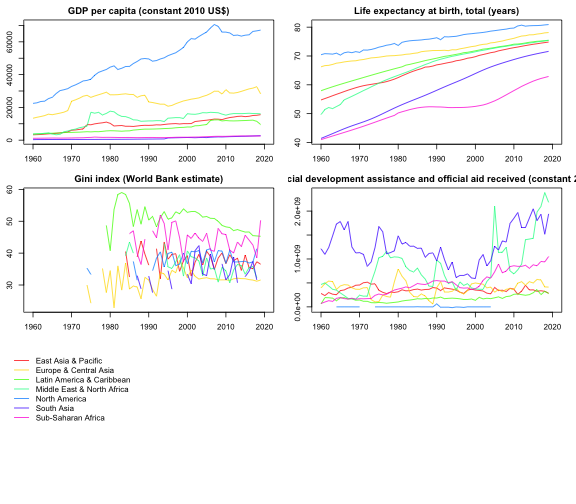

1.1 wlddev - World Bank Development Data

This dataset contains 5 key World Bank Development Indicators covering 216 countries for up to 61 years (1960-2020). It is a balanced balanced panel with 216 \times 61 = 13176 observations. –>

library(collapse)

head(wlddev)

# country iso3c date year decade region income OECD PCGDP LIFEEX GINI ODA

# 1 Afghanistan AFG 1961-01-01 1960 1960 South Asia Low income FALSE NA 32.446 NA 116769997

# 2 Afghanistan AFG 1962-01-01 1961 1960 South Asia Low income FALSE NA 32.962 NA 232080002

# 3 Afghanistan AFG 1963-01-01 1962 1960 South Asia Low income FALSE NA 33.471 NA 112839996

# 4 Afghanistan AFG 1964-01-01 1963 1960 South Asia Low income FALSE NA 33.971 NA 237720001

# 5 Afghanistan AFG 1965-01-01 1964 1960 South Asia Low income FALSE NA 34.463 NA 295920013

# 6 Afghanistan AFG 1966-01-01 1965 1960 South Asia Low income FALSE NA 34.948 NA 341839996

# POP

# 1 8996973

# 2 9169410

# 3 9351441

# 4 9543205

# 5 9744781

# 6 9956320

# The variables have "label" attributes. Use vlabels() to get and set labels

namlab(wlddev, class = TRUE)

# Variable Class

# 1 country character

# 2 iso3c factor

# 3 date Date

# 4 year integer

# 5 decade integer

# 6 region factor

# 7 income factor

# 8 OECD logical

# 9 PCGDP numeric

# 10 LIFEEX numeric

# 11 GINI numeric

# 12 ODA numeric

# 13 POP numeric

# Label

# 1 Country Name

# 2 Country Code

# 3 Date Recorded (Fictitious)

# 4 Year

# 5 Decade

# 6 Region

# 7 Income Level

# 8 Is OECD Member Country?

# 9 GDP per capita (constant 2010 US$)

# 10 Life expectancy at birth, total (years)

# 11 Gini index (World Bank estimate)

# 12 Net official development assistance and official aid received (constant 2018 US$)

# 13 Population, totalOf the categorical identifiers, the date variable was artificially

generated to have an example dataset that contains all common data types

frequently encountered in R. A detailed statistical description of this

data is computed by descr:

# A fast and detailed statistical description

descr(wlddev)

# Dataset: wlddev, 13 Variables, N = 13176

# ----------------------------------------------------------------------------------------------------

# country (character): Country Name

# Statistics

# N Ndist

# 13176 216

# Table

# Freq Perc

# Afghanistan 61 0.46

# Albania 61 0.46

# Algeria 61 0.46

# American Samoa 61 0.46

# Andorra 61 0.46

# Angola 61 0.46

# Antigua and Barbuda 61 0.46

# Argentina 61 0.46

# Armenia 61 0.46

# Aruba 61 0.46

# Australia 61 0.46

# Austria 61 0.46

# Azerbaijan 61 0.46

# Bahamas, The 61 0.46

# ... 202 Others 12322 93.52

#

# Summary of Table Frequencies

# Min. 1st Qu. Median Mean 3rd Qu. Max.

# 61 61 61 61 61 61

# ----------------------------------------------------------------------------------------------------

# iso3c (factor): Country Code

# Statistics

# N Ndist

# 13176 216

# Table

# Freq Perc

# ABW 61 0.46

# AFG 61 0.46

# AGO 61 0.46

# ALB 61 0.46

# AND 61 0.46

# ARE 61 0.46

# ARG 61 0.46

# ARM 61 0.46

# ASM 61 0.46

# ATG 61 0.46

# AUS 61 0.46

# AUT 61 0.46

# AZE 61 0.46

# BDI 61 0.46

# ... 202 Others 12322 93.52

#

# Summary of Table Frequencies

# Min. 1st Qu. Median Mean 3rd Qu. Max.

# 61 61 61 61 61 61

# ----------------------------------------------------------------------------------------------------

# date (Date): Date Recorded (Fictitious)

# Statistics

# N Ndist Min Max

# 13176 61 1961-01-01 2021-01-01

# ----------------------------------------------------------------------------------------------------

# year (integer): Year

# Statistics

# N Ndist Mean SD Min Max Skew Kurt

# 13176 61 1990 17.61 1960 2020 -0 1.8

# Quantiles

# 1% 5% 10% 25% 50% 75% 90% 95% 99%

# 1960 1963 1966 1975 1990 2005 2014 2017 2020

# ----------------------------------------------------------------------------------------------------

# decade (integer): Decade

# Statistics

# N Ndist Mean SD Min Max Skew Kurt

# 13176 7 1985.57 17.51 1960 2020 0.03 1.79

# Quantiles

# 1% 5% 10% 25% 50% 75% 90% 95% 99%

# 1960 1960 1960 1970 1990 2000 2010 2010 2020

# ----------------------------------------------------------------------------------------------------

# region (factor): Region

# Statistics

# N Ndist

# 13176 7

# Table

# Freq Perc

# Europe & Central Asia 3538 26.85

# Sub-Saharan Africa 2928 22.22

# Latin America & Caribbean 2562 19.44

# East Asia & Pacific 2196 16.67

# Middle East & North Africa 1281 9.72

# South Asia 488 3.70

# North America 183 1.39

# ----------------------------------------------------------------------------------------------------

# income (factor): Income Level

# Statistics

# N Ndist

# 13176 4

# Table

# Freq Perc

# High income 4819 36.57

# Upper middle income 3660 27.78

# Lower middle income 2867 21.76

# Low income 1830 13.89

# ----------------------------------------------------------------------------------------------------

# OECD (logical): Is OECD Member Country?

# Statistics

# N Ndist

# 13176 2

# Table

# Freq Perc

# FALSE 10980 83.33

# TRUE 2196 16.67

# ----------------------------------------------------------------------------------------------------

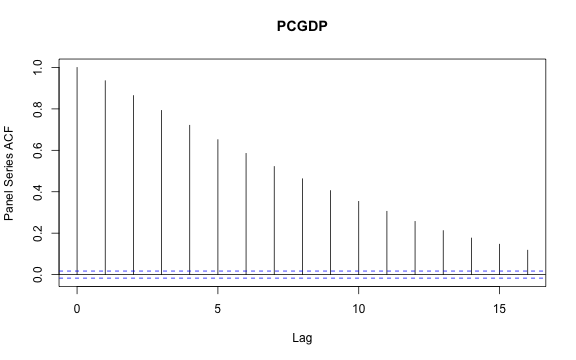

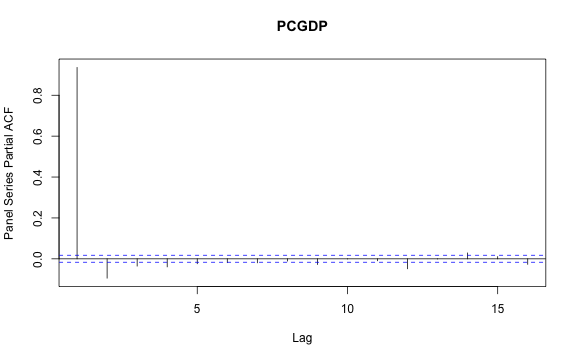

# PCGDP (numeric): GDP per capita (constant 2010 US$)

# Statistics (28.13% NAs)

# N Ndist Mean SD Min Max Skew Kurt

# 9470 9470 12048.78 19077.64 132.08 196061.42 3.13 17.12

# Quantiles

# 1% 5% 10% 25% 50% 75% 90% 95% 99%

# 227.71 399.62 555.55 1303.19 3767.16 14787.03 35646.02 48507.84 92340.28

# ----------------------------------------------------------------------------------------------------

# LIFEEX (numeric): Life expectancy at birth, total (years)

# Statistics (11.43% NAs)

# N Ndist Mean SD Min Max Skew Kurt

# 11670 10548 64.3 11.48 18.91 85.42 -0.67 2.67

# Quantiles

# 1% 5% 10% 25% 50% 75% 90% 95% 99%

# 35.83 42.77 46.83 56.36 67.44 72.95 77.08 79.34 82.36

# ----------------------------------------------------------------------------------------------------

# GINI (numeric): Gini index (World Bank estimate)

# Statistics (86.76% NAs)

# N Ndist Mean SD Min Max Skew Kurt

# 1744 368 38.53 9.2 20.7 65.8 0.6 2.53

# Quantiles

# 1% 5% 10% 25% 50% 75% 90% 95% 99%

# 24.6 26.3 27.6 31.5 36.4 45 52.6 55.98 60.5

# ----------------------------------------------------------------------------------------------------

# ODA (numeric): Net official development assistance and official aid received (constant 2018 US$)

# Statistics (34.67% NAs)

# N Ndist Mean SD Min Max Skew Kurt

# 8608 7832 454'720131 868'712654 -997'679993 2.56715605e+10 6.98 114.89

# Quantiles

# 1% 5% 10% 25% 50% 75% 90%

# -12'593999.7 1'363500.01 8'347000.31 44'887499.8 165'970001 495'042503 1.18400697e+09

# 95% 99%

# 1.93281696e+09 3.73380782e+09

# ----------------------------------------------------------------------------------------------------

# POP (numeric): Population, total

# Statistics (1.95% NAs)

# N Ndist Mean SD Min Max Skew Kurt

# 12919 12877 24'245971.6 102'120674 2833 1.39771500e+09 9.75 108.91

# Quantiles

# 1% 5% 10% 25% 50% 75% 90% 95% 99%

# 8698.84 31083.3 62268.4 443791 4'072517 12'816178 46'637331.4 81'177252.5 308'862641

# ----------------------------------------------------------------------------------------------------The output of descr can be converted into a tidy data

frame using:

head(as.data.frame(descr(wlddev)))

# Variable Class Label N Ndist Min Max Mean SD

# 1 country character Country Name 13176 216 NA NA NA NA

# 2 iso3c factor Country Code 13176 216 NA NA NA NA

# 3 date Date Date Recorded (Fictitious) 13176 61 -3287 18628 NA NA

# 4 year integer Year 13176 61 1960 2020 1990.000 17.60749

# 5 decade integer Decade 13176 7 1960 2020 1985.574 17.51175

# 6 region factor Region 13176 7 NA NA NA NA

# Skew Kurt 1% 5% 10% 25% 50% 75% 90% 95% 99%

# 1 NA NA NA NA NA NA NA NA NA NA NA

# 2 NA NA NA NA NA NA NA NA NA NA NA

# 3 NA NA NA NA NA NA NA NA NA NA NA

# 4 -5.812381e-16 1.799355 1960 1963 1966 1975 1990 2005 2014 2017 2020

# 5 3.256512e-02 1.791726 1960 1960 1960 1970 1990 2000 2010 2010 2020

# 6 NA NA NA NA NA NA NA NA NA NA NANote that descr does not require data to be labeled.

Since wlddev is a panel data set tracking countries over

time, we might be interested in checking which variables are

time-varying, with the function varying:

varying(wlddev, wlddev$iso3c)

# country iso3c date year decade region income OECD PCGDP LIFEEX GINI ODA

# FALSE FALSE TRUE TRUE TRUE FALSE FALSE FALSE TRUE TRUE TRUE TRUE

# POP

# TRUEvarying tells us that all 5 variables

PCGDP, LIFEEX, GINI,

ODA and POP vary over time. However the

OECD variable does not, so this data does not track when

countries entered the OECD. We can also have a more detailed look

letting varying check the variation in each country:

head(varying(wlddev, wlddev$iso3c, any_group = FALSE))

# country iso3c date year decade region income OECD PCGDP LIFEEX GINI ODA POP

# ABW FALSE FALSE TRUE TRUE TRUE FALSE FALSE FALSE TRUE TRUE NA TRUE TRUE

# AFG FALSE FALSE TRUE TRUE TRUE FALSE FALSE FALSE TRUE TRUE NA TRUE TRUE

# AGO FALSE FALSE TRUE TRUE TRUE FALSE FALSE FALSE TRUE TRUE TRUE TRUE TRUE

# ALB FALSE FALSE TRUE TRUE TRUE FALSE FALSE FALSE TRUE TRUE TRUE TRUE TRUE

# AND FALSE FALSE TRUE TRUE TRUE FALSE FALSE FALSE TRUE NA NA NA TRUE

# ARE FALSE FALSE TRUE TRUE TRUE FALSE FALSE FALSE TRUE TRUE TRUE TRUE TRUENA indicates that there are no data for this country. In

general data is varying if it has two or more distinct non-missing

values. We could also take a closer look at observation counts and

distinct values using:

head(fnobs(wlddev, wlddev$iso3c))

# country iso3c date year decade region income OECD PCGDP LIFEEX GINI ODA POP

# ABW 61 61 61 61 61 61 61 61 32 60 0 20 60

# AFG 61 61 61 61 61 61 61 61 18 60 0 60 60

# AGO 61 61 61 61 61 61 61 61 40 60 3 58 60

# ALB 61 61 61 61 61 61 61 61 40 60 9 32 60

# AND 61 61 61 61 61 61 61 61 50 0 0 0 60

# ARE 61 61 61 61 61 61 61 61 45 60 2 45 60

head(fndistinct(wlddev, wlddev$iso3c))

# country iso3c date year decade region income OECD PCGDP LIFEEX GINI ODA POP

# ABW 1 1 61 61 7 1 1 1 32 60 0 20 60

# AFG 1 1 61 61 7 1 1 1 18 60 0 60 60

# AGO 1 1 61 61 7 1 1 1 40 59 3 58 60

# ALB 1 1 61 61 7 1 1 1 40 59 9 32 60

# AND 1 1 61 61 7 1 1 1 50 0 0 0 60

# ARE 1 1 61 61 7 1 1 1 45 60 2 45 60Note that varying is more efficient than

fndistinct, although both functions are very fast. Even

more powerful summary methods for multilevel / panel data are provided

by qsu (shorthand for quick-summary). It is

modeled after STATA’s summarize and

xtsummarize commands. Calling qsu on the data

gives a concise summary. We can subset columns internally using the

cols argument:

qsu(wlddev, cols = 9:12, higher = TRUE) # higher adds skewness and kurtosis

# N Mean SD Min Max Skew Kurt

# PCGDP 9470 12048.778 19077.6416 132.0776 196061.417 3.1276 17.1154

# LIFEEX 11670 64.2963 11.4764 18.907 85.4171 -0.6748 2.6718

# GINI 1744 38.5341 9.2006 20.7 65.8 0.596 2.5329

# ODA 8608 454'720131 868'712654 -997'679993 2.56715605e+10 6.9832 114.889We could easily compute these statistics by region:

qsu(wlddev, by = ~region, cols = 9:12, vlabels = TRUE, higher = TRUE)

# , , PCGDP: GDP per capita (constant 2010 US$)

#

# N Mean SD Min Max Skew Kurt

# East Asia & Pacific 1467 10513.2441 14383.5507 132.0776 71992.1517 1.6392 4.7419

# Europe & Central Asia 2243 25992.9618 26435.1316 366.9354 196061.417 2.2022 10.1977

# Latin America & Caribbean 1976 7628.4477 8818.5055 1005.4085 88391.3331 4.1702 29.3739

# Middle East & North Africa 842 13878.4213 18419.7912 578.5996 116232.753 2.4178 9.7669

# North America 180 48699.76 24196.2855 16405.9053 113236.091 0.938 2.9688

# South Asia 382 1235.9256 1611.2232 265.9625 8476.564 2.7874 10.3402

# Sub-Saharan Africa 2380 1840.0259 2596.0104 164.3366 20532.9523 3.1161 14.4175

#

# , , LIFEEX: Life expectancy at birth, total (years)

#

# N Mean SD Min Max Skew Kurt

# East Asia & Pacific 1807 65.9445 10.1633 18.907 85.078 -0.856 4.3125

# Europe & Central Asia 3046 72.1625 5.7602 45.369 85.4171 -0.5594 4.0434

# Latin America & Caribbean 2107 68.3486 7.3768 41.762 82.1902 -1.0357 3.9379

# Middle East & North Africa 1226 66.2508 9.8306 29.919 82.8049 -0.8782 3.3054

# North America 144 76.2867 3.5734 68.8978 82.0488 -0.1963 1.976

# South Asia 480 57.5585 11.3004 32.446 78.921 -0.2623 2.1147

# Sub-Saharan Africa 2860 51.581 8.6876 26.172 74.5146 0.1452 2.7245

#

# , , GINI: Gini index (World Bank estimate)

#

# N Mean SD Min Max Skew Kurt

# East Asia & Pacific 154 37.7571 5.0318 27.8 49.1 0.3631 2.3047

# Europe & Central Asia 798 31.9114 4.5809 20.7 48.4 0.2989 2.5254

# Latin America & Caribbean 413 49.9557 5.4821 34.4 63.3 -0.0386 2.3631

# Middle East & North Africa 91 36.0143 5.2073 26 47.4 0.0241 1.9209

# North America 49 37.4816 3.6972 31 41.5 -0.4282 1.4577

# South Asia 46 33.8804 3.9898 25.9 43.8 0.4205 2.7748

# Sub-Saharan Africa 193 44.6606 8.2003 29.8 65.8 0.6598 2.8451

#

# , , ODA: Net official development assistance and official aid received (constant 2018 US$)

#

# N Mean SD Min Max

# East Asia & Pacific 1537 352'017964 622'847624 -997'679993 4.04487988e+09

# Europe & Central Asia 787 402'455286 568'237036 -322'070007 4.34612988e+09

# Latin America & Caribbean 1972 172'880081 260'781049 -444'040009 2.99568994e+09

# Middle East & North Africa 1105 732'380009 1.52108993e+09 -141'789993 2.56715605e+10

# North America 39 468717.916 10'653560.8 -15'869999.9 61'509998.3

# South Asia 466 1.27049955e+09 1.61492889e+09 -247'369995 8.75425977e+09

# Sub-Saharan Africa 2702 486'371750 656'336230 -18'409999.8 1.18790801e+10

# Skew Kurt

# East Asia & Pacific 2.722 11.5221

# Europe & Central Asia 3.1305 15.2525

# Latin America & Caribbean 3.3259 22.4569

# Middle East & North Africa 6.6304 79.2238

# North America 4.8602 29.3092

# South Asia 1.7923 6.501

# Sub-Saharan Africa 4.5456 48.8447Computing summary statistics by country is of course also possible

but would be too much information. Fortunately qsu lets us

do something much more powerful:

qsu(wlddev, pid = ~ iso3c, cols = c(1,4,9:12), vlabels = TRUE, higher = TRUE)

# , , country: Country Name

#

# N/T Mean SD Min Max Skew Kurt

# Overall 13176 - - - - - -

# Between 216 - - - - - -

# Within 61 - - - - - -

#

# , , year: Year

#

# N/T Mean SD Min Max Skew Kurt

# Overall 13176 1990 17.6075 1960 2020 -0 1.7994

# Between 216 1990 0 1990 1990 - -

# Within 61 1990 17.6075 1960 2020 -0 1.7994

#

# , , PCGDP: GDP per capita (constant 2010 US$)

#

# N/T Mean SD Min Max Skew Kurt

# Overall 9470 12048.778 19077.6416 132.0776 196061.417 3.1276 17.1154

# Between 206 12962.6054 20189.9007 253.1886 141200.38 3.1263 16.2299

# Within 45.9709 12048.778 6723.6808 -33504.8721 76767.5254 0.6576 17.2003

#

# , , LIFEEX: Life expectancy at birth, total (years)

#

# N/T Mean SD Min Max Skew Kurt

# Overall 11670 64.2963 11.4764 18.907 85.4171 -0.6748 2.6718

# Between 207 64.9537 9.8936 40.9663 85.4171 -0.5012 2.1693

# Within 56.3768 64.2963 6.0842 32.9068 84.4198 -0.2643 3.7027

#

# , , GINI: Gini index (World Bank estimate)

#

# N/T Mean SD Min Max Skew Kurt

# Overall 1744 38.5341 9.2006 20.7 65.8 0.596 2.5329

# Between 167 39.4233 8.1356 24.8667 61.7143 0.5832 2.8256

# Within 10.4431 38.5341 2.9277 25.3917 55.3591 0.3263 5.3389

#

# , , ODA: Net official development assistance and official aid received (constant 2018 US$)

#

# N/T Mean SD Min Max Skew Kurt

# Overall 8608 454'720131 868'712654 -997'679993 2.56715605e+10 6.9832 114.889

# Between 178 439'168412 569'049959 468717.916 3.62337432e+09 2.355 9.9487

# Within 48.3596 454'720131 650'709624 -2.44379420e+09 2.45610972e+10 9.6047 263.3716The above output reports 3 sets of summary statistics for each variable: Statistics computed on the Overall (raw) data, and on the Between-country (i.e. country averaged) and Within-country (i.e. country-demeaned) data1. This is a powerful way to summarize panel data because aggregating the data by country gives us a cross-section of countries with no variation over time, whereas subtracting country specific means from the data eliminates all cross-sectional variation.

So what can these statistics tell us about our data? The

N/T columns shows that for PCGDP we have 8995

total observations, that we observe GDP data for 203 countries and that

we have on average 44.3 observations (time-periods) per country. In

contrast the GINI Index is only available for 161 countries with 8.4

observations on average. The Overall and Within mean

of the data are identical by definition, and the Between mean

would also be the same in a balanced panel with no missing observations.

In practice we have unequal amounts of observations for different

countries, thus countries have different weights in the Overall

mean and the difference between Overall and

Between-country mean reflects this discrepancy. The most

interesting statistic in this summary arguably is the standard

deviation, and in particular the comparison of the Between-SD

reflecting the variation between countries and the Within-SD

reflecting average variation over time. This comparison shows that

PCGDP, LIFEEX and GINI vary more between countries, but ODA received

varies more within countries over time. The 0 Between-SD for

the year variable and the fact that the Overall and

Within-SD are equal shows that year is individual invariant.

Thus qsu also provides the same information as

varying, but with additional details on the relative

magnitudes of cross-sectional and time series variation. It is also a

common pattern that the kurtosis increases in

within-transformed data, while the skewness decreases in most

cases.

We could also do all of that by regions to have a look at the between and within country variations inside and across different World regions:

qsu(wlddev, by = ~ region, pid = ~ iso3c, cols = 9:12, vlabels = TRUE, higher = TRUE)

# , , Overall, PCGDP: GDP per capita (constant 2010 US$)

#

# N/T Mean SD Min Max Skew Kurt

# East Asia & Pacific 1467 10513.2441 14383.5507 132.0776 71992.1517 1.6392 4.7419

# Europe & Central Asia 2243 25992.9618 26435.1316 366.9354 196061.417 2.2022 10.1977

# Latin America & Caribbean 1976 7628.4477 8818.5055 1005.4085 88391.3331 4.1702 29.3739

# Middle East & North Africa 842 13878.4213 18419.7912 578.5996 116232.753 2.4178 9.7669

# North America 180 48699.76 24196.2855 16405.9053 113236.091 0.938 2.9688

# South Asia 382 1235.9256 1611.2232 265.9625 8476.564 2.7874 10.3402

# Sub-Saharan Africa 2380 1840.0259 2596.0104 164.3366 20532.9523 3.1161 14.4175

#

# , , Between, PCGDP: GDP per capita (constant 2010 US$)

#

# N/T Mean SD Min Max Skew Kurt

# East Asia & Pacific 34 10513.2441 12771.742 444.2899 39722.0077 1.1488 2.7089

# Europe & Central Asia 56 25992.9618 24051.035 809.4753 141200.38 2.0026 9.0733

# Latin America & Caribbean 38 7628.4477 8470.9708 1357.3326 77403.7443 4.4548 32.4956

# Middle East & North Africa 20 13878.4213 17251.6962 1069.6596 64878.4021 1.9508 6.0796

# North America 3 48699.76 18604.4369 35260.4708 74934.5874 0.7065 1.5

# South Asia 8 1235.9256 1488.3669 413.68 6621.5002 3.0546 11.3083

# Sub-Saharan Africa 47 1840.0259 2234.3254 253.1886 9922.0052 2.1442 6.8259

#

# , , Within, PCGDP: GDP per capita (constant 2010 US$)

#

# N/T Mean SD Min Max Skew

# East Asia & Pacific 43.1471 12048.778 6615.8248 -11964.6472 49541.463 0.824

# Europe & Central Asia 40.0536 12048.778 10971.0483 -33504.8721 76767.5254 0.4307

# Latin America & Caribbean 52 12048.778 2451.2636 -354.1639 23036.3668 0.1259

# Middle East & North Africa 42.1 12048.778 6455.0512 -18674.4049 63665.0446 1.8525

# North America 60 12048.778 15470.4609 -29523.1017 50350.2816 -0.2451

# South Asia 47.75 12048.778 617.0934 10026.9155 14455.865 0.9846

# Sub-Saharan Africa 50.6383 12048.778 1321.764 4846.3834 24883.1246 1.3879

# Kurt

# East Asia & Pacific 8.9418

# Europe & Central Asia 7.4139

# Latin America & Caribbean 7.1939

# Middle East & North Africa 23.0457

# North America 3.2075

# South Asia 5.6366

# Sub-Saharan Africa 28.0186

#

# , , Overall, LIFEEX: Life expectancy at birth, total (years)

#

# N/T Mean SD Min Max Skew Kurt

# East Asia & Pacific 1807 65.9445 10.1633 18.907 85.078 -0.856 4.3125

# Europe & Central Asia 3046 72.1625 5.7602 45.369 85.4171 -0.5594 4.0434

# Latin America & Caribbean 2107 68.3486 7.3768 41.762 82.1902 -1.0357 3.9379

# Middle East & North Africa 1226 66.2508 9.8306 29.919 82.8049 -0.8782 3.3054

# North America 144 76.2867 3.5734 68.8978 82.0488 -0.1963 1.976

# South Asia 480 57.5585 11.3004 32.446 78.921 -0.2623 2.1147

# Sub-Saharan Africa 2860 51.581 8.6876 26.172 74.5146 0.1452 2.7245

#

# , , Between, LIFEEX: Life expectancy at birth, total (years)

#

# N/T Mean SD Min Max Skew Kurt

# East Asia & Pacific 32 65.9445 7.6833 49.7995 77.9008 -0.3832 2.4322

# Europe & Central Asia 55 72.1625 4.4378 60.1129 85.4171 -0.6584 2.8874

# Latin America & Caribbean 40 68.3486 4.9199 53.4918 82.1902 -0.9947 4.1617

# Middle East & North Africa 21 66.2508 5.922 52.5371 76.7395 -0.3181 3.0331

# North America 3 76.2867 1.3589 74.8065 78.4175 0.1467 1.6356

# South Asia 8 57.5585 5.6158 49.1972 69.3429 0.6643 3.1288

# Sub-Saharan Africa 48 51.581 5.657 40.9663 71.5749 1.1333 4.974

#

# , , Within, LIFEEX: Life expectancy at birth, total (years)

#

# N/T Mean SD Min Max Skew Kurt

# East Asia & Pacific 56.4688 64.2963 6.6528 32.9068 83.9918 -0.3949 3.9528

# Europe & Central Asia 55.3818 64.2963 3.6723 46.3045 78.6265 -0.0307 3.7576

# Latin America & Caribbean 52.675 64.2963 5.4965 46.7831 79.5026 -0.3827 2.9936

# Middle East & North Africa 58.381 64.2963 7.8467 41.6187 78.8872 -0.6216 2.808

# North America 48 64.2963 3.3049 54.7766 69.4306 -0.4327 2.3027

# South Asia 60 64.2963 9.8062 41.4342 83.0122 -0.0946 2.1035

# Sub-Saharan Africa 59.5833 64.2963 6.5933 41.5678 84.4198 0.0811 2.7821

#

# , , Overall, GINI: Gini index (World Bank estimate)

#

# N/T Mean SD Min Max Skew Kurt

# East Asia & Pacific 154 37.7571 5.0318 27.8 49.1 0.3631 2.3047

# Europe & Central Asia 798 31.9114 4.5809 20.7 48.4 0.2989 2.5254

# Latin America & Caribbean 413 49.9557 5.4821 34.4 63.3 -0.0386 2.3631

# Middle East & North Africa 91 36.0143 5.2073 26 47.4 0.0241 1.9209

# North America 49 37.4816 3.6972 31 41.5 -0.4282 1.4577

# South Asia 46 33.8804 3.9898 25.9 43.8 0.4205 2.7748

# Sub-Saharan Africa 193 44.6606 8.2003 29.8 65.8 0.6598 2.8451

#

# , , Between, GINI: Gini index (World Bank estimate)

#

# N/T Mean SD Min Max Skew Kurt

# East Asia & Pacific 23 37.7571 4.3005 30.8 45.8857 0.4912 2.213

# Europe & Central Asia 49 31.9114 4.0611 24.8667 40.935 0.3323 2.291

# Latin America & Caribbean 25 49.9557 4.0492 41.1 57.9 0.03 2.2573

# Middle East & North Africa 15 36.0143 4.7002 29.05 42.7 -0.2035 1.6815

# North America 2 37.4816 3.3563 33.1222 40.0129 -0.5503 1.3029

# South Asia 7 33.8804 3.0052 30.3556 38.8 0.2786 1.4817

# Sub-Saharan Africa 46 44.6606 6.8844 34.52 61.7143 0.9464 3.2302

#

# , , Within, GINI: Gini index (World Bank estimate)

#

# N/T Mean SD Min Max Skew Kurt

# East Asia & Pacific 6.6957 38.5341 2.6125 31.0187 45.8901 -0.0585 3.0933

# Europe & Central Asia 16.2857 38.5341 2.1195 31.2841 50.1387 0.6622 6.1763

# Latin America & Caribbean 16.52 38.5341 3.6955 25.3917 48.8341 -0.0506 2.7603

# Middle East & North Africa 6.0667 38.5341 2.2415 31.7675 45.777 0.0408 4.7415

# North America 24.5 38.5341 1.5507 33.0212 42.7119 -1.3213 6.8321

# South Asia 6.5714 38.5341 2.6244 32.8341 45.0675 -0.1055 2.6885

# Sub-Saharan Africa 4.1957 38.5341 4.4553 27.9452 55.3591 0.6338 4.4174

#

# , , Overall, ODA: Net official development assistance and official aid received (constant 2018 US$)

#

# N/T Mean SD Min Max

# East Asia & Pacific 1537 352'017964 622'847624 -997'679993 4.04487988e+09

# Europe & Central Asia 787 402'455286 568'237036 -322'070007 4.34612988e+09

# Latin America & Caribbean 1972 172'880081 260'781049 -444'040009 2.99568994e+09

# Middle East & North Africa 1105 732'380009 1.52108993e+09 -141'789993 2.56715605e+10

# North America 39 468717.916 10'653560.8 -15'869999.9 61'509998.3

# South Asia 466 1.27049955e+09 1.61492889e+09 -247'369995 8.75425977e+09

# Sub-Saharan Africa 2702 486'371750 656'336230 -18'409999.8 1.18790801e+10

# Skew Kurt

# East Asia & Pacific 2.722 11.5221

# Europe & Central Asia 3.1305 15.2525

# Latin America & Caribbean 3.3259 22.4569

# Middle East & North Africa 6.6304 79.2238

# North America 4.8602 29.3092

# South Asia 1.7923 6.501

# Sub-Saharan Africa 4.5456 48.8447

#

# , , Between, ODA: Net official development assistance and official aid received (constant 2018 US$)

#

# N/T Mean SD Min Max

# East Asia & Pacific 31 352'017964 457'183279 1'654615.38 1.63585532e+09

# Europe & Central Asia 32 402'455286 438'074771 12'516000.1 2.05456932e+09

# Latin America & Caribbean 37 172'880081 167'160838 2'225483.88 538'386665

# Middle East & North Africa 21 732'380009 775'418887 3'112820.5 2.86174883e+09

# North America 1 468717.916 0 468717.916 468717.916

# South Asia 8 1.27049955e+09 1.18347893e+09 27'152499.9 3.62337432e+09

# Sub-Saharan Africa 48 486'371750 397'995105 28'801206.9 1.55049113e+09

# Skew Kurt

# East Asia & Pacific 1.7771 5.1361

# Europe & Central Asia 2.0449 7.2489

# Latin America & Caribbean 0.8981 2.4954

# Middle East & North Africa 1.1363 3.6377

# North America - -

# South Asia 0.7229 2.4072

# Sub-Saharan Africa 0.9871 3.1513

#

# , , Within, ODA: Net official development assistance and official aid received (constant 2018 US$)

#

# N/T Mean SD Min Max

# East Asia & Pacific 49.5806 454'720131 422'992450 -2.04042108e+09 3.59673152e+09

# Europe & Central Asia 24.5938 454'720131 361'916875 -1.08796786e+09 3.30549004e+09

# Latin America & Caribbean 53.2973 454'720131 200'159960 -527'706542 3.28976141e+09

# Middle East & North Africa 52.619 454'720131 1.30860235e+09 -2.34610870e+09 2.45610972e+10

# North America 39 454'720131 10'653560.8 438'381413 515'761411

# South Asia 58.25 454'720131 1.09880524e+09 -2.44379420e+09 5.58560558e+09

# Sub-Saharan Africa 56.2917 454'720131 521'897637 -952'168698 1.12814455e+10

# Skew Kurt

# East Asia & Pacific 0.2908 14.4428

# Europe & Central Asia 2.3283 18.6937

# Latin America & Caribbean 3.7015 41.7506

# Middle East & North Africa 7.8663 117.987

# North America 4.8602 29.3092

# South Asia 1.8418 9.4588

# Sub-Saharan Africa 5.2349 86.1042Notice that the output here is a 4D array of summary statistics,

which we could also subset ([) or permute

(aperm) to view these statistics in any convenient way. If

we don’t like the array, we can also output as a nested list of

statistics matrices:

l <- qsu(wlddev, by = ~ region, pid = ~ iso3c, cols = 9:12, vlabels = TRUE,

higher = TRUE, array = FALSE)

str(l, give.attr = FALSE)

# List of 4

# $ PCGDP: GDP per capita (constant 2010 US$) :List of 3

# ..$ Overall: 'qsu' num [1:7, 1:7] 1467 2243 1976 842 180 ...

# ..$ Between: 'qsu' num [1:7, 1:7] 34 56 38 20 3 ...

# ..$ Within : 'qsu' num [1:7, 1:7] 43.1 40.1 52 42.1 60 ...

# $ LIFEEX: Life expectancy at birth, total (years) :List of 3

# ..$ Overall: 'qsu' num [1:7, 1:7] 1807 3046 2107 1226 144 ...

# ..$ Between: 'qsu' num [1:7, 1:7] 32 55 40 21 3 ...

# ..$ Within : 'qsu' num [1:7, 1:7] 56.5 55.4 52.7 58.4 48 ...

# $ GINI: Gini index (World Bank estimate) :List of 3

# ..$ Overall: 'qsu' num [1:7, 1:7] 154 798 413 91 49 ...

# ..$ Between: 'qsu' num [1:7, 1:7] 23 49 25 15 2 ...

# ..$ Within : 'qsu' num [1:7, 1:7] 6.7 16.29 16.52 6.07 24.5 ...

# $ ODA: Net official development assistance and official aid received (constant 2018 US$):List of 3

# ..$ Overall: 'qsu' num [1:7, 1:7] 1537 787 1972 1105 39 ...

# ..$ Between: 'qsu' num [1:7, 1:7] 31 32 37 21 1 ...

# ..$ Within : 'qsu' num [1:7, 1:7] 49.6 24.6 53.3 52.6 39 ...Such a list of statistics matrices could, for example, be converted

into a tidy data frame using unlist2d (more about this in

the section on list-processing):

head(unlist2d(l, idcols = c("Variable", "Trans"), row.names = "Region"))

# Variable Trans Region N Mean

# 1 PCGDP: GDP per capita (constant 2010 US$) Overall East Asia & Pacific 1467 10513.244

# 2 PCGDP: GDP per capita (constant 2010 US$) Overall Europe & Central Asia 2243 25992.962

# 3 PCGDP: GDP per capita (constant 2010 US$) Overall Latin America & Caribbean 1976 7628.448

# 4 PCGDP: GDP per capita (constant 2010 US$) Overall Middle East & North Africa 842 13878.421

# 5 PCGDP: GDP per capita (constant 2010 US$) Overall North America 180 48699.760

# 6 PCGDP: GDP per capita (constant 2010 US$) Overall South Asia 382 1235.926

# SD Min Max Skew Kurt

# 1 14383.551 132.0776 71992.152 1.6392248 4.741856

# 2 26435.132 366.9354 196061.417 2.2022472 10.197685

# 3 8818.505 1005.4085 88391.333 4.1701769 29.373869

# 4 18419.791 578.5996 116232.753 2.4177586 9.766883

# 5 24196.285 16405.9053 113236.091 0.9380056 2.968769

# 6 1611.223 265.9625 8476.564 2.7873830 10.340176This is not yet end of qsu’s functionality, as we can

also do all of the above on panel-surveys utilizing weights

(w argument).

Finally, we can look at (weighted) pairwise correlations in this data:

pwcor(wlddev[9:12], N = TRUE, P = TRUE)

# PCGDP LIFEEX GINI ODA

# PCGDP 1 (9470) .57* (9022) -.44* (1735) -.16* (7128)

# LIFEEX .57* (9022) 1 (11670) -.35* (1742) -.02 (8142)

# GINI -.44* (1735) -.35* (1742) 1 (1744) -.20* (1109)

# ODA -.16* (7128) -.02 (8142) -.20* (1109) 1 (8608)which can of course also be computed on averaged and within-transformed data:

print(pwcor(fmean(wlddev[9:12], wlddev$iso3c), N = TRUE, P = TRUE), show = "lower.tri")

# PCGDP LIFEEX GINI ODA

# PCGDP 1 (206)

# LIFEEX .60* (199) 1 (207)

# GINI -.42* (165) -.40* (165) 1 (167)

# ODA -.25* (172) -.21* (172) -.19* (145) 1 (178)

# N is same as overall N shown above...

print(pwcor(fwithin(wlddev[9:12], wlddev$iso3c), P = TRUE), show = "lower.tri")

# PCGDP LIFEEX GINI ODA

# PCGDP 1

# LIFEEX .31* 1

# GINI -.01 -.16* 1

# ODA -.01 .17* -.08* 1A useful function called by pwcor is

pwnobs, which is very handy to explore the joint

observation structure when selecting variables to include in a

statistical model:

pwnobs(wlddev)

# country iso3c date year decade region income OECD PCGDP LIFEEX GINI ODA POP

# country 13176 13176 13176 13176 13176 13176 13176 13176 9470 11670 1744 8608 12919

# iso3c 13176 13176 13176 13176 13176 13176 13176 13176 9470 11670 1744 8608 12919

# date 13176 13176 13176 13176 13176 13176 13176 13176 9470 11670 1744 8608 12919

# year 13176 13176 13176 13176 13176 13176 13176 13176 9470 11670 1744 8608 12919

# decade 13176 13176 13176 13176 13176 13176 13176 13176 9470 11670 1744 8608 12919

# region 13176 13176 13176 13176 13176 13176 13176 13176 9470 11670 1744 8608 12919

# income 13176 13176 13176 13176 13176 13176 13176 13176 9470 11670 1744 8608 12919

# OECD 13176 13176 13176 13176 13176 13176 13176 13176 9470 11670 1744 8608 12919

# PCGDP 9470 9470 9470 9470 9470 9470 9470 9470 9470 9022 1735 7128 9470

# LIFEEX 11670 11670 11670 11670 11670 11670 11670 11670 9022 11670 1742 8142 11659

# GINI 1744 1744 1744 1744 1744 1744 1744 1744 1735 1742 1744 1109 1744

# ODA 8608 8608 8608 8608 8608 8608 8608 8608 7128 8142 1109 8608 8597

# POP 12919 12919 12919 12919 12919 12919 12919 12919 9470 11659 1744 8597 12919Note that both pwcor/pwcov and pwnobs are

faster on matrices.

1.2 GGDC10S - GGDC 10-Sector Database

The Groningen Growth and Development Centre 10-Sector Database provides long-run data on sectoral productivity performance in Africa, Asia, and Latin America. Variables covered in the data set are annual series of value added (VA, in local currency), and persons employed (EMP) for 10 broad sectors.

head(GGDC10S)

# Country Regioncode Region Variable Year AGR MIN MAN PU

# 1 BWA SSA Sub-saharan Africa VA 1960 NA NA NA NA

# 2 BWA SSA Sub-saharan Africa VA 1961 NA NA NA NA

# 3 BWA SSA Sub-saharan Africa VA 1962 NA NA NA NA

# 4 BWA SSA Sub-saharan Africa VA 1963 NA NA NA NA

# 5 BWA SSA Sub-saharan Africa VA 1964 16.30154 3.494075 0.7365696 0.1043936

# 6 BWA SSA Sub-saharan Africa VA 1965 15.72700 2.495768 1.0181992 0.1350976

# CON WRT TRA FIRE GOV OTH SUM

# 1 NA NA NA NA NA NA NA

# 2 NA NA NA NA NA NA NA

# 3 NA NA NA NA NA NA NA

# 4 NA NA NA NA NA NA NA

# 5 0.6600454 6.243732 1.658928 1.119194 4.822485 2.341328 37.48229

# 6 1.3462312 7.064825 1.939007 1.246789 5.695848 2.678338 39.34710

namlab(GGDC10S, class = TRUE)

# Variable Class Label

# 1 Country character Country

# 2 Regioncode character Region code

# 3 Region character Region

# 4 Variable character Variable

# 5 Year numeric Year

# 6 AGR numeric Agriculture

# 7 MIN numeric Mining

# 8 MAN numeric Manufacturing

# 9 PU numeric Utilities

# 10 CON numeric Construction

# 11 WRT numeric Trade, restaurants and hotels

# 12 TRA numeric Transport, storage and communication

# 13 FIRE numeric Finance, insurance, real estate and business services

# 14 GOV numeric Government services

# 15 OTH numeric Community, social and personal services

# 16 SUM numeric Summation of sector GDP

fnobs(GGDC10S)

# Country Regioncode Region Variable Year AGR MIN MAN PU

# 5027 5027 5027 5027 5027 4364 4355 4355 4354

# CON WRT TRA FIRE GOV OTH SUM

# 4355 4355 4355 4355 3482 4248 4364

fndistinct(GGDC10S)

# Country Regioncode Region Variable Year AGR MIN MAN PU

# 43 6 6 2 67 4353 4224 4353 4237

# CON WRT TRA FIRE GOV OTH SUM

# 4339 4344 4334 4349 3470 4238 4364

# The countries included:

cat(funique(GGDC10S$Country, sort = TRUE))

# ARG BOL BRA BWA CHL CHN COL CRI DEW DNK EGY ESP ETH FRA GBR GHA HKG IDN IND ITA JPN KEN KOR MEX MOR MUS MWI MYS NGA NGA(alt) NLD PER PHL SEN SGP SWE THA TWN TZA USA VEN ZAF ZMBThe first problem in summarizing this data is that value added (VA)

is in local currency, the second that it contains 2 different Variables

(VA and EMP) stacked in the same column. One way of solving the first

problem could be converting the data to percentages through dividing by

the overall VA and EMP contained in the last column. A different

solution involving grouped-scaling is introduced in section 6.4. The

second problem is again nicely handled by qsu, which can

also compute panel-statistics by groups.

# Converting data to percentages of overall VA / EMP, dapply keeps the attributes, see section 6.1

pGGDC10S <- ftransformv(GGDC10S, 6:15, `*`, 100 / SUM)

# Summarizing the sectoral data by variable, overall, between and within countries

su <- qsu(pGGDC10S, by = ~ Variable, pid = ~ Variable + Country,

cols = 6:16, higher = TRUE)

# This gives a 4D array of summary statistics

str(su)

# 'qsu' num [1:2, 1:7, 1:3, 1:11] 2225 2139 35.1 17.3 26.7 ...

# - attr(*, "dimnames")=List of 4

# ..$ : chr [1:2] "EMP" "VA"

# ..$ : chr [1:7] "N/T" "Mean" "SD" "Min" ...

# ..$ : chr [1:3] "Overall" "Between" "Within"

# ..$ : chr [1:11] "AGR" "MIN" "MAN" "PU" ...

# Permuting this array to a more readible format

aperm(su, c(4L, 2L, 3L, 1L))

# , , Overall, EMP

#

# N/T Mean SD Min Max Skew Kurt

# AGR 2225 35.0949 26.7235 0.156 100 0.4856 2.0951

# MIN 2216 1.0349 1.4247 0.0043 9.4097 3.1281 15.0429

# MAN 2216 14.9768 8.0392 0.5822 45.2974 0.4272 2.8455

# PU 2215 0.5782 0.3601 0.0154 2.4786 1.2588 5.5822

# CON 2216 5.6583 2.9252 0.1417 15.9887 -0.0631 2.2725

# WRT 2216 14.9155 6.5573 0.809 32.8046 -0.1814 2.3226

# TRA 2216 4.8193 2.652 0.1506 15.0454 0.9477 4.4695

# FIRE 2216 4.6501 4.3518 0.0799 21.7717 1.2345 4.0831

# GOV 1780 13.1263 8.0844 0 34.8897 0.6301 2.5338

# OTH 2109 8.3977 6.6409 0.421 34.8942 1.4028 4.3191

# SUM 2225 36846.8741 96318.6544 173.8829 764200 5.0229 30.9814

#

# , , Between, EMP

#

# N/T Mean SD Min Max Skew Kurt

# AGR 42 35.0949 24.1204 0.9997 88.3263 0.5202 2.2437

# MIN 42 1.0349 1.2304 0.0296 6.8532 2.7313 12.331

# MAN 42 14.9768 7.0375 1.718 32.3439 -0.0164 2.4321

# PU 42 0.5782 0.3041 0.0671 1.3226 0.5459 2.6905

# CON 42 5.6583 2.4748 0.5037 10.3691 -0.4442 2.3251

# WRT 42 14.9155 5.264 4.0003 26.7699 -0.5478 2.7294

# TRA 42 4.8193 2.4712 0.374 12.3887 0.9782 4.7857

# FIRE 42 4.6501 3.4468 0.1505 12.4402 0.6052 2.5883

# GOV 34 13.1263 7.2832 2.0086 29.1577 0.3858 2.1068

# OTH 40 8.3977 6.266 1.3508 26.4036 1.4349 4.3185

# SUM 42 36846.8741 89205.503 369.2353 485820.474 4.0761 19.3159

#

# , , Within, EMP

#

# N/T Mean SD Min Max Skew Kurt

# AGR 52.9762 26.3768 11.5044 -5.3234 107.4891 1.6002 11.9683

# MIN 52.7619 3.4006 0.7182 -1.4068 7.509 -0.1988 15.0343

# MAN 52.7619 17.476 3.8861 -1.1061 40.3964 -0.082 7.3994

# PU 52.7381 1.3896 0.1929 0.6346 2.5461 0.5731 7.8523

# CON 52.7619 5.7633 1.5596 0.8964 12.9663 0.3077 4.1248

# WRT 52.7619 15.7581 3.91 3.7356 29.7615 0.3339 3.3386

# TRA 52.7619 6.3486 0.9623 2.3501 11.1064 0.2671 5.7162

# FIRE 52.7619 5.8228 2.6567 -2.9836 15.9974 0.5486 4.0288

# GOV 52.3529 13.263 3.5088 -2.1983 23.611 -0.5647 4.7286

# OTH 52.725 7.3941 2.1999 -2.3286 17.4413 0.2929 6.4631

# SUM 52.9762 21'566436.8 36327.1443 21'287906.3 21'844816.3 0.6649 34.2495

#

# , , Overall, VA

#

# N/T Mean SD Min Max Skew Kurt

# AGR 2139 17.3082 15.5066 0.0318 95.222 1.3274 4.8827

# MIN 2139 5.8514 9.0975 0 59.0602 2.7193 10.9184

# MAN 2139 20.0651 8.0033 0.979 41.6281 -0.0348 2.6831

# PU 2139 2.2298 1.1088 0 9.1888 0.8899 6.2385

# CON 2139 5.8721 2.5113 0.5951 25.8575 1.5002 8.9578

# WRT 2139 16.631 5.1374 4.5187 39.7594 0.3455 3.2655

# TRA 2139 7.9329 3.1057 0.7957 25.9625 1.0122 5.7137

# FIRE 2139 7.0377 12.7077 -151.065 39.1705 -6.2254 59.8739

# GOV 1702 13.406 6.3521 0.7607 32.5107 0.4888 2.9043

# OTH 2139 6.4046 5.8416 0.2327 31.4474 1.4978 4.2051

# SUM 2139 43'961639.1 358'350627 0 8.06794210e+09 15.7682 289.4632

#

# , , Between, VA

#

# N/T Mean SD Min Max Skew Kurt

# AGR 43 17.3082 13.1901 0.6058 63.8364 1.1328 4.7111

# MIN 43 5.8514 7.5705 0.0475 27.9214 1.7113 4.807

# MAN 43 20.0651 6.6423 4.1869 32.1138 -0.3591 2.619

# PU 43 2.2298 0.7457 0.4462 4.307 0.6196 3.8724

# CON 43 5.8721 1.8455 2.9405 12.9279 1.3285 6.505

# WRT 43 16.631 4.3779 8.4188 26.3876 0.292 2.4553

# TRA 43 7.9329 2.7222 2.037 14.8892 0.6362 3.6686

# FIRE 43 7.0377 9.0284 -35.6144 23.8658 -2.674 15.0975

# GOV 35 13.406 5.875 1.9757 27.7714 0.5198 3.0416

# OTH 43 6.4046 5.6137 1.1184 19.5299 1.3274 3.2043

# SUM 43 43'961639.1 185'785836 5077.7231 1.23317892e+09 5.8098 36.9778

#

# , , Within, VA

#

# N/T Mean SD Min Max Skew Kurt

# AGR 49.7442 26.3768 8.1532 5.245 94.3499 1.234 9.5269

# MIN 49.7442 3.4006 5.0451 -20.051 35.7053 0.341 13.102

# MAN 49.7442 17.476 4.4647 1.1188 36.3501 -0.1928 3.9339

# PU 49.7442 1.3896 0.8206 -1.0904 6.2714 0.5258 5.3462

# CON 49.7442 5.7633 1.7031 -0.3464 18.6929 0.7493 6.3751

# WRT 49.7442 15.7581 2.6884 4.6513 32.6691 0.2338 4.4953

# TRA 49.7442 6.3486 1.4951 0.9187 18.5977 0.6995 10.1129

# FIRE 49.7442 5.8228 8.9428 -109.6278 54.1241 -2.7728 54.5971

# GOV 48.6286 13.263 2.4153 5.1249 22.8497 0.1663 3.3083

# OTH 49.7442 7.3941 1.6159 -0.9151 19.3116 0.7301 9.6613

# SUM 49.7442 21'566436.8 306'429102 -1.21124805e+09 6.85632962e+09 12.6639 253.1145The statistics show that the dataset is very consistent: Employment data cover 42 countries and 53 time-periods in almost all sectors. Agriculture is the largest sector in terms of employment, amounting to a 35% share of employment across countries and time, with a standard deviation (SD) of around 27%. The between-country SD in agricultural employment share is 24% and the within SD is 12%, indicating that processes of structural change are very gradual and most of the variation in structure is between countries. The next largest sectors after agriculture are manufacturing, wholesale and retail trade and government, each claiming an approx. 15% share of the economy. In these sectors the between-country SD is also about twice as large as the within-country SD.

In terms of value added, the data covers 43 countries in 50 time-periods. Agriculture, manufacturing, wholesale and retail trade and government are also the largest sectors in terms of VA, but with a diminished agricultural share (around 17%) and a greater share for manufacturing (around 20%). The variation between countries is again greater than the variation within countries, but it seems that at least in terms of agricultural VA share there is also a considerable within-country SD of 8%. This is also true for the finance and real estate sector with a within SD of 9%, suggesting (using a bit of common sense) that a diminishing VA share in agriculture and increased VA share in finance and real estate was a pattern characterizing most of the countries in this sample.

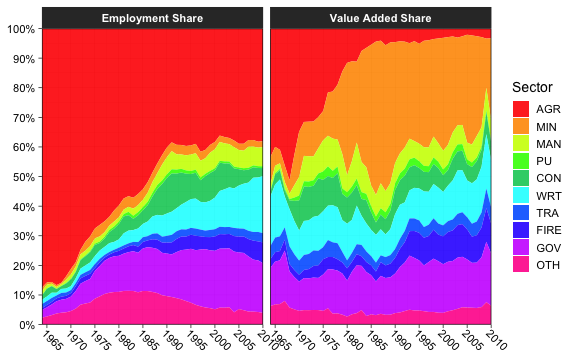

As a final step we consider a plot function which can be used to plot the structural transformation of any supported country. Below for Botswana:

library(data.table)

library(ggplot2)

library(magrittr)

plotGGDC <- function(ctry) {

# Select and subset

fsubset(GGDC10S, Country == ctry, Variable, Year, AGR:SUM) %>%

# Convert to shares and replace negative values with NA

ftransform(fselect(., AGR:OTH) %>%

lapply(`*`, 1 / SUM) %>%

replace_outliers(0, NA, "min")) %>%

# Remove totals column and make proper variable labels

ftransform(Variable = recode_char(Variable,

VA = "Value Added Share",

EMP = "Employment Share"),

SUM = NULL) %>%

# Fast conversion to data.table

qDT %>%

# data.table's melt function

melt(1:2, variable.name = "Sector", na.rm = TRUE) %>%

# ggplot with some scales provided by the 'scales' package

ggplot(aes(x = Year, y = value, fill = Sector)) +

geom_area(position = "fill", alpha = 0.9) + labs(x = NULL, y = NULL) +

theme_linedraw(base_size = 14L) + facet_wrap( ~ Variable) +

scale_fill_manual(values = sub("#00FF66", "#00CC66", rainbow(10L))) +

scale_x_continuous(breaks = scales::pretty_breaks(n = 7L), expand = c(0, 0)) +

scale_y_continuous(breaks = scales::pretty_breaks(n = 10L), expand = c(0, 0),

labels = scales::percent) +

theme(axis.text.x = element_text(angle = 315, hjust = 0, margin = ggplot2::margin(t = 0)),

strip.background = element_rect(colour = "grey20", fill = "grey20"),

strip.text = element_text(face = "bold"))

}

# Plotting the structural transformation of Botswana

plotGGDC("BWA")

plot of chunk scplot_BWA

2. Fast Data Manipulation

A lot of R code is not concerned with statistical computations but with preliminary data wrangling. For various reasons R development has focused on data frames as the main medium to contain data, although matrices / arrays provide significantly faster methods for common manipulations.

A first essential step towards optimizing R code is thus to speed up very frequent manipulations on data frames. collapse introduces a set of highly optimized functions to efficiently manipulate (mostly) data frames. Most manipulations can be conducted in non-standard evaluation or standard evaluation (utilizing different functions), and all functions preserve the data structure (i.e. they can be used with data.table, tbl_df, grouped_df, pdata.frame etc.).

2.1 Selecting and Replacing Columns

fselect is an analogue to dplyr::select,

but executes about 100x faster. It can be used to select variables using

expressions involving variable names:

library(magrittr) # Pipe operators

fselect(wlddev, country, year, PCGDP:ODA) %>% head(2)

# country year PCGDP LIFEEX GINI ODA

# 1 Afghanistan 1960 NA 32.446 NA 116769997

# 2 Afghanistan 1961 NA 32.962 NA 232080002

fselect(wlddev, -country, -year, -(PCGDP:ODA)) %>% head(2)

# iso3c date decade region income OECD POP

# 1 AFG 1961-01-01 1960 South Asia Low income FALSE 8996973

# 2 AFG 1962-01-01 1960 South Asia Low income FALSE 9169410

library(microbenchmark)

microbenchmark(fselect = collapse::fselect(wlddev, country, year, PCGDP:ODA),

select = dplyr::select(wlddev, country, year, PCGDP:ODA))

# Unit: microseconds

# expr min lq mean median uq max neval

# fselect 2.911 3.4645 4.76297 4.3665 5.3710 20.459 100

# select 382.284 393.0055 442.70734 410.3075 441.4265 2951.262 100in contrast to dplyr::select, fselect has a

replacement method

# Computing the log of columns

fselect(wlddev, PCGDP:POP) <- lapply(fselect(wlddev, PCGDP:POP), log)

head(wlddev, 2)

# country iso3c date year decade region income OECD PCGDP LIFEEX GINI ODA

# 1 Afghanistan AFG 1961-01-01 1960 1960 South Asia Low income FALSE NA 3.479577 NA 18.57572

# 2 Afghanistan AFG 1962-01-01 1961 1960 South Asia Low income FALSE NA 3.495355 NA 19.26259

# POP

# 1 16.01240

# 2 16.03138

# Efficient deleting

fselect(wlddev, country, year, PCGDP:POP) <- NULL

head(wlddev, 2)

# iso3c date decade region income OECD

# 1 AFG 1961-01-01 1960 South Asia Low income FALSE

# 2 AFG 1962-01-01 1960 South Asia Low income FALSE

rm(wlddev)and it can also return information about the selected columns other than the data itself.

fselect(wlddev, PCGDP:POP, return = "names")

# [1] "PCGDP" "LIFEEX" "GINI" "ODA" "POP"

fselect(wlddev, PCGDP:POP, return = "indices")

# [1] 9 10 11 12 13

fselect(wlddev, PCGDP:POP, return = "named_indices")

# PCGDP LIFEEX GINI ODA POP

# 9 10 11 12 13

fselect(wlddev, PCGDP:POP, return = "logical")

# [1] FALSE FALSE FALSE FALSE FALSE FALSE FALSE FALSE TRUE TRUE TRUE TRUE TRUE

fselect(wlddev, PCGDP:POP, return = "named_logical")

# country iso3c date year decade region income OECD PCGDP LIFEEX GINI ODA

# FALSE FALSE FALSE FALSE FALSE FALSE FALSE FALSE TRUE TRUE TRUE TRUE

# POP

# TRUEWhile fselect is faster than dplyr::select,

it is also simpler and does not offer special methods for grouped

tibbles (e.g. where grouping columns are always selected) and some other

dplyr-specific features of select. We will see

that this is not a problem at all when working with statistical

functions in collapse that have a grouped_df method, but users

should be careful replacing dplyr::select with

fselect in dplyr scripts. From collapse

1.6.0, fselect has explicit support for sf data

frames.

The standard-evaluation analogue to fselect is the

function get_vars. get_vars can be used to

select variables using names, indices, logical vectors, functions or

regular expressions evaluated against column names:

get_vars(wlddev, 9:13) %>% head(1)

# PCGDP LIFEEX GINI ODA POP

# 1 NA 32.446 NA 116769997 8996973

get_vars(wlddev, c("PCGDP","LIFEEX","GINI","ODA","POP")) %>% head(1)

# PCGDP LIFEEX GINI ODA POP

# 1 NA 32.446 NA 116769997 8996973

get_vars(wlddev, "[[:upper:]]", regex = TRUE) %>% head(1)

# OECD PCGDP LIFEEX GINI ODA POP

# 1 FALSE NA 32.446 NA 116769997 8996973

get_vars(wlddev, "PC|LI|GI|OD|PO", regex = TRUE) %>% head(1)

# PCGDP LIFEEX GINI ODA POP

# 1 NA 32.446 NA 116769997 8996973

# Same as above, vectors of regular expressions are sequentially passed to grep

get_vars(wlddev, c("PC","LI","GI","OD","PO"), regex = TRUE) %>% head(1)

# PCGDP LIFEEX GINI ODA POP

# 1 NA 32.446 NA 116769997 8996973

get_vars(wlddev, is.numeric) %>% head(1)

# year decade PCGDP LIFEEX GINI ODA POP

# 1 1960 1960 NA 32.446 NA 116769997 8996973

# Returning other information

get_vars(wlddev, is.numeric, return = "names")

# [1] "year" "decade" "PCGDP" "LIFEEX" "GINI" "ODA" "POP"

get_vars(wlddev, "[[:upper:]]", regex = TRUE, return = "named_indices")

# OECD PCGDP LIFEEX GINI ODA POP

# 8 9 10 11 12 13Replacing operations are conducted analogous:

get_vars(wlddev, 9:13) <- lapply(get_vars(wlddev, 9:13), log)

get_vars(wlddev, 9:13) <- NULL

head(wlddev, 2)

# country iso3c date year decade region income OECD

# 1 Afghanistan AFG 1961-01-01 1960 1960 South Asia Low income FALSE

# 2 Afghanistan AFG 1962-01-01 1961 1960 South Asia Low income FALSE

rm(wlddev)get_vars is about 2x faster than

[.data.frame, and get_vars<- is about 6-8x

faster than [<-.data.frame.

In addition to get_vars, collapse offers a set

of functions to efficiently select and replace data by data type:

num_vars, cat_vars (for categorical =

non-numeric columns), char_vars, fact_vars,

logi_vars and date_vars (for date and

date-time columns).

head(num_vars(wlddev), 2)

# year decade PCGDP LIFEEX GINI ODA POP

# 1 1960 1960 NA 32.446 NA 116769997 8996973

# 2 1961 1960 NA 32.962 NA 232080002 9169410

head(cat_vars(wlddev), 2)

# country iso3c date region income OECD

# 1 Afghanistan AFG 1961-01-01 South Asia Low income FALSE

# 2 Afghanistan AFG 1962-01-01 South Asia Low income FALSE

head(fact_vars(wlddev), 2)

# iso3c region income

# 1 AFG South Asia Low income

# 2 AFG South Asia Low income

# Replacing

fact_vars(wlddev) <- fact_vars(wlddev)2.2 Subsetting

fsubset is an enhanced version of

base::subset using C functions from the data.table

package for fast and subsetting operations. In contrast to

base::subset, fsubset allows multiple

comma-separated select arguments after the subset argument, and it also

preserves all attributes of subsetted columns:

# Returning only value-added data after 1990

fsubset(GGDC10S, Variable == "VA" & Year > 1990, Country, Year, AGR:GOV) %>% head(2)

# Country Year AGR MIN MAN PU CON WRT TRA FIRE GOV

# 1 BWA 1991 303.1157 2646.950 472.6488 160.6079 580.0876 806.7509 232.7884 432.6965 1073.263

# 2 BWA 1992 333.4364 2690.939 537.4274 178.4532 678.7320 725.2577 285.1403 517.2141 1234.012

# Same thing

fsubset(GGDC10S, Variable == "VA" & Year > 1990, -(Regioncode:Variable), -(OTH:SUM)) %>% head(2)

# Country Year AGR MIN MAN PU CON WRT TRA FIRE GOV

# 1 BWA 1991 303.1157 2646.950 472.6488 160.6079 580.0876 806.7509 232.7884 432.6965 1073.263

# 2 BWA 1992 333.4364 2690.939 537.4274 178.4532 678.7320 725.2577 285.1403 517.2141 1234.012It is also possible to use standard evaluation with

fsubset, but for these purposes the function

ss exists as a fast and more secure alternative to

[.data.frame:

ss(GGDC10S, 1:2, 6:16) # or fsubset(GGDC10S, 1:2, 6:16), but not recommended.

# AGR MIN MAN PU CON WRT TRA FIRE GOV OTH SUM

# 1 NA NA NA NA NA NA NA NA NA NA NA

# 2 NA NA NA NA NA NA NA NA NA NA NA

ss(GGDC10S, -(1:2), c("AGR","MIN")) %>% head(2)

# AGR MIN

# 1 NA NA

# 2 NA NAThanks to the data.table C code and optimized R code,

fsubset is very fast.

microbenchmark(base = subset(GGDC10S, Variable == "VA" & Year > 1990, AGR:SUM),

collapse = fsubset(GGDC10S, Variable == "VA" & Year > 1990, AGR:SUM))

# Unit: microseconds

# expr min lq mean median uq max neval

# base 150.839 156.5585 199.63105 160.3510 166.993 3778.191 100

# collapse 45.715 49.0975 51.55545 50.9015 52.357 82.861 100

microbenchmark(GGDC10S[1:10, 1:10], ss(GGDC10S, 1:10, 1:10))

# Unit: microseconds

# expr min lq mean median uq max neval

# GGDC10S[1:10, 1:10] 36.367 36.982 38.14599 37.515 38.294 76.219 100

# ss(GGDC10S, 1:10, 1:10) 1.886 2.050 2.30666 2.214 2.419 8.405 100like base::subset, fsubset is S3 generic

with methods for vectors, matrices and data frames. For certain classes

such as factors, fsubset.default also improves upon

[, but the largest improvements are with the data frame

method.

2.3 Reordering Rows and Columns

roworder is a fast analogue to

dplyr::arrange. The syntax is inspired by

data.table::setorder, so that negative variable names

indicate descending sort.

roworder(GGDC10S, -Variable, Country) %>% ss(1:2, 1:8)

# Country Regioncode Region Variable Year AGR MIN MAN

# 1 ARG LAM Latin America VA 1950 5.887857e-07 0 3.53443e-06

# 2 ARG LAM Latin America VA 1951 9.165327e-07 0 4.77277e-06

microbenchmark(collapse = collapse::roworder(GGDC10S, -Variable, Country),

dplyr = dplyr::arrange(GGDC10S, desc(Variable), Country))

# Unit: microseconds

# expr min lq mean median uq max neval

# collapse 113.406 152.151 176.7567 165.722 183.0855 538.330 100

# dplyr 1240.168 1299.372 1618.5869 1384.755 1507.8160 8350.552 100In contrast to data.table::setorder,

roworder creates a copy of the data frame (unless data are

already sorted). If this copy is not required,

data.table::setorder is faster. The function

roworderv is a standard evaluation analogue to

roworder:

# Same as above

roworderv(GGDC10S, c("Variable", "Country"), decreasing = c(TRUE, FALSE)) %>% ss(1:2, 1:8)

# Country Regioncode Region Variable Year AGR MIN MAN

# 1 ARG LAM Latin America VA 1950 5.887857e-07 0 3.53443e-06

# 2 ARG LAM Latin America VA 1951 9.165327e-07 0 4.77277e-06With roworderv, it is also possible to move or exchange

rows in a data frame:

# If length(neworder) < fnrow(data), the default (pos = "front") brings rows to the front

roworderv(GGDC10S, neworder = which(GGDC10S$Country == "GHA")) %>% ss(1:2, 1:8)

# Country Regioncode Region Variable Year AGR MIN MAN

# 1 GHA SSA Sub-saharan Africa VA 1960 0.03576160 0.005103683 0.01744687

# 2 GHA SSA Sub-saharan Africa VA 1961 0.03823049 0.005456030 0.01865136

# pos = "end" brings rows to the end

roworderv(GGDC10S, neworder = which(GGDC10S$Country == "BWA"), pos = "end") %>% ss(1:2, 1:8)

# Country Regioncode Region Variable Year AGR MIN MAN

# 1 ETH SSA Sub-saharan Africa VA 1960 NA NA NA

# 2 ETH SSA Sub-saharan Africa VA 1961 4495.614 11.86979 109.616

# pos = "exchange" arranges selected rows in the order they are passed, without affecting other rows

roworderv(GGDC10S, neworder = with(GGDC10S, c(which(Country == "GHA"),

which(Country == "BWA"))), pos = "exchange") %>% ss(1:2, 1:8)

# Country Regioncode Region Variable Year AGR MIN MAN

# 1 GHA SSA Sub-saharan Africa VA 1960 0.03576160 0.005103683 0.01744687

# 2 GHA SSA Sub-saharan Africa VA 1961 0.03823049 0.005456030 0.01865136Similarly, the pair colorder / colorderv

facilitates efficient reordering of columns in a data frame. These

functions not require a deep copy of the data and are very fast. To

reorder columns by reference, see also

data.table::setcolorder.

# The default is again pos = "front" which brings selected columns to the front / left

colorder(GGDC10S, Variable, Country, Year) %>% head(2)

# Variable Country Year Regioncode Region AGR MIN MAN PU CON WRT TRA FIRE GOV OTH SUM

# 1 VA BWA 1960 SSA Sub-saharan Africa NA NA NA NA NA NA NA NA NA NA NA

# 2 VA BWA 1961 SSA Sub-saharan Africa NA NA NA NA NA NA NA NA NA NA NA2.4 Transforming and Computing New Columns

ftransform is an improved version of

base::transform for data frames and lists.

ftransform can be used to compute new columns or modify and

delete existing columns, and always returns the entire data frame.

ftransform(GGDC10S, AGR_perc = AGR / SUM * 100, # Computing Agricultural percentage

Year = as.integer(Year), # Coercing Year to integer

AGR = NULL) %>% tail(2) # Deleting column AGR

# Country Regioncode Region Variable Year MIN MAN PU

# 5026 EGY MENA Middle East and North Africa EMP 2011 27.56394 2373.814 317.9979

# 5027 EGY MENA Middle East and North Africa EMP 2012 24.78083 2348.434 324.9332

# CON WRT TRA FIRE GOV OTH SUM AGR_perc

# 5026 2795.264 3020.236 2048.335 814.7403 5635.522 NA 22219.39 23.33961

# 5027 2931.196 3109.522 2065.004 832.4770 5735.623 NA 22532.56 22.90281

# Computing scalar results replicates them

ftransform(GGDC10S, MIN_mean = fmean(MIN), Intercept = 1) %>% tail(2)

# Country Regioncode Region Variable Year AGR MIN MAN

# 5026 EGY MENA Middle East and North Africa EMP 2011 5185.919 27.56394 2373.814

# 5027 EGY MENA Middle East and North Africa EMP 2012 5160.590 24.78083 2348.434

# PU CON WRT TRA FIRE GOV OTH SUM MIN_mean Intercept

# 5026 317.9979 2795.264 3020.236 2048.335 814.7403 5635.522 NA 22219.39 1867909 1

# 5027 324.9332 2931.196 3109.522 2065.004 832.4770 5735.623 NA 22532.56 1867909 1The modification ftransformv exists to transform

specific columns using a function:

# Apply the log to columns 6-16

GGDC10S %>% ftransformv(6:16, log) %>% tail(2)

# Country Regioncode Region Variable Year AGR MIN MAN

# 5026 EGY MENA Middle East and North Africa EMP 2011 8.553702 3.316508 7.772253

# 5027 EGY MENA Middle East and North Africa EMP 2012 8.548806 3.210070 7.761504

# PU CON WRT TRA FIRE GOV OTH SUM

# 5026 5.762045 7.935682 8.013090 7.624782 6.702869 8.636845 NA 10.00872

# 5027 5.783620 7.983166 8.042224 7.632888 6.724406 8.654452 NA 10.02272

# Convert data to percentage terms

GGDC10S %>% ftransformv(6:16, `*`, 100/SUM) %>% tail(2)

# Country Regioncode Region Variable Year AGR MIN MAN

# 5026 EGY MENA Middle East and North Africa EMP 2011 23.33961 0.1240535 10.68352

# 5027 EGY MENA Middle East and North Africa EMP 2012 22.90281 0.1099779 10.42240

# PU CON WRT TRA FIRE GOV OTH SUM

# 5026 1.431173 12.58029 13.59279 9.218680 3.666798 25.36308 NA 100

# 5027 1.442061 13.00871 13.80013 9.164534 3.694551 25.45482 NA 100

# Apply log to numeric columns

GGDC10S %>% ftransformv(is.numeric, log) %>% tail(2)

# Country Regioncode Region Variable Year AGR MIN MAN

# 5026 EGY MENA Middle East and North Africa EMP 7.606387 8.553702 3.316508 7.772253

# 5027 EGY MENA Middle East and North Africa EMP 7.606885 8.548806 3.210070 7.761504

# PU CON WRT TRA FIRE GOV OTH SUM

# 5026 5.762045 7.935682 8.013090 7.624782 6.702869 8.636845 NA 10.00872

# 5027 5.783620 7.983166 8.042224 7.632888 6.724406 8.654452 NA 10.02272Instead of passing comma-separated column = value

expressions, it is also possible to bulk-process data with

fransform by passing a single list of expressions (such as

a data frame). This is useful for more complex transformations involving

multiple steps:

# Same as above, but also replacing any generated infinite values with NA

GGDC10S %>% ftransform(num_vars(.) %>% lapply(log) %>% replace_Inf) %>% tail(2)

# Country Regioncode Region Variable Year AGR MIN MAN

# 5026 EGY MENA Middle East and North Africa EMP 7.606387 8.553702 3.316508 7.772253

# 5027 EGY MENA Middle East and North Africa EMP 7.606885 8.548806 3.210070 7.761504

# PU CON WRT TRA FIRE GOV OTH SUM

# 5026 5.762045 7.935682 8.013090 7.624782 6.702869 8.636845 NA 10.00872

# 5027 5.783620 7.983166 8.042224 7.632888 6.724406 8.654452 NA 10.02272This mode of usage toggles automatic column matching and replacement.

Non-matching columns are added to the data frame. Apart from to

ftransform, the function settransform(v) can

be used to change the input data frame by reference:

# Computing a new column and deleting some others by reference

settransform(GGDC10S, FIRE_MAN = FIRE / MAN,

Regioncode = NULL, Region = NULL)

tail(GGDC10S, 2)

# Country Variable Year AGR MIN MAN PU CON WRT TRA FIRE

# 5026 EGY EMP 2011 5185.919 27.56394 2373.814 317.9979 2795.264 3020.236 2048.335 814.7403

# 5027 EGY EMP 2012 5160.590 24.78083 2348.434 324.9332 2931.196 3109.522 2065.004 832.4770

# GOV OTH SUM FIRE_MAN

# 5026 5635.522 NA 22219.39 0.3432200

# 5027 5735.623 NA 22532.56 0.3544817

rm(GGDC10S)

# Bulk-processing the data into percentage terms

settransformv(GGDC10S, 6:16, `*`, 100/SUM)

tail(GGDC10S, 2)

# Country Regioncode Region Variable Year AGR MIN MAN

# 5026 EGY MENA Middle East and North Africa EMP 2011 23.33961 0.1240535 10.68352

# 5027 EGY MENA Middle East and North Africa EMP 2012 22.90281 0.1099779 10.42240

# PU CON WRT TRA FIRE GOV OTH SUM

# 5026 1.431173 12.58029 13.59279 9.218680 3.666798 25.36308 NA 100

# 5027 1.442061 13.00871 13.80013 9.164534 3.694551 25.45482 NA 100

# Same thing via replacement

ftransform(GGDC10S) <- fselect(GGDC10S, AGR:SUM) %>% lapply(`*`, 100/.$SUM)

# Or using double pipes

GGDC10S %<>% ftransformv(6:16, `*`, 100/SUM)

rm(GGDC10S)Another convenient addition is provided by the function

fcompute, which can be used to compute new columns in a

data frame environment and returns the computed columns in a new data

frame:

fcompute(GGDC10S, AGR_perc = AGR / SUM * 100, FIRE_MAN = FIRE / MAN) %>% tail(2)

# AGR_perc FIRE_MAN

# 5026 23.33961 0.3432200

# 5027 22.90281 0.3544817For more complex tasks see ?ftransform.

2.5 Adding and Binding Columns

For cases where multiple columns are computed and need to be added to

a data frame (regardless of whether names are duplicated or not),

collapse introduces the predicate add_vars.

Together with add_vars, the function add_stub

is useful to add a prefix (default) or postfix to computed variables

keeping the variable names unique:

# Efficient adding logged versions of some variables

add_vars(wlddev) <- get_vars(wlddev, 9:13) %>% lapply(log10) %>% add_stub("log10.")

head(wlddev, 2)

# country iso3c date year decade region income OECD PCGDP LIFEEX GINI ODA

# 1 Afghanistan AFG 1961-01-01 1960 1960 South Asia Low income FALSE NA 32.446 NA 116769997

# 2 Afghanistan AFG 1962-01-01 1961 1960 South Asia Low income FALSE NA 32.962 NA 232080002

# POP log10.PCGDP log10.LIFEEX log10.GINI log10.ODA log10.POP

# 1 8996973 NA 1.511161 NA 8.067331 6.954096

# 2 9169410 NA 1.518014 NA 8.365638 6.962341

rm(wlddev)By default add_vars appends a data frame towards the

(right) end, but it can also replace columns in front or at other

positions in the data frame:

add_vars(wlddev, "front") <- get_vars(wlddev, 9:13) %>% lapply(log10) %>% add_stub("log10.")

head(wlddev, 2)

# log10.PCGDP log10.LIFEEX log10.GINI log10.ODA log10.POP country iso3c date year decade

# 1 NA 1.511161 NA 8.067331 6.954096 Afghanistan AFG 1961-01-01 1960 1960

# 2 NA 1.518014 NA 8.365638 6.962341 Afghanistan AFG 1962-01-01 1961 1960

# region income OECD PCGDP LIFEEX GINI ODA POP

# 1 South Asia Low income FALSE NA 32.446 NA 116769997 8996973

# 2 South Asia Low income FALSE NA 32.962 NA 232080002 9169410

rm(wlddev)

add_vars(wlddev, c(10L,12L,14L,16L,18L)) <- get_vars(wlddev, 9:13) %>% lapply(log10) %>% add_stub("log10.")

head(wlddev, 2)

# country iso3c date year decade region income OECD PCGDP log10.PCGDP LIFEEX

# 1 Afghanistan AFG 1961-01-01 1960 1960 South Asia Low income FALSE NA NA 32.446

# 2 Afghanistan AFG 1962-01-01 1961 1960 South Asia Low income FALSE NA NA 32.962

# log10.LIFEEX GINI log10.GINI ODA log10.ODA POP log10.POP

# 1 1.511161 NA NA 116769997 8.067331 8996973 6.954096

# 2 1.518014 NA NA 232080002 8.365638 9169410 6.962341

rm(wlddev)add_vars can also be used without replacement, where it

serves as a more efficient version of cbind.data.frame,

with the difference that the data structure and attributes of the first

argument are preserved:

add_vars(wlddev, get_vars(wlddev, 9:13) %>% lapply(log) %>% add_stub("log."),

get_vars(wlddev, 9:13) %>% lapply(log10) %>% add_stub("log10.")) %>% head(2)

# country iso3c date year decade region income OECD PCGDP LIFEEX GINI ODA

# 1 Afghanistan AFG 1961-01-01 1960 1960 South Asia Low income FALSE NA 32.446 NA 116769997

# 2 Afghanistan AFG 1962-01-01 1961 1960 South Asia Low income FALSE NA 32.962 NA 232080002

# POP log.PCGDP log.LIFEEX log.GINI log.ODA log.POP log10.PCGDP log10.LIFEEX log10.GINI

# 1 8996973 NA 3.479577 NA 18.57572 16.01240 NA 1.511161 NA

# 2 9169410 NA 3.495355 NA 19.26259 16.03138 NA 1.518014 NA

# log10.ODA log10.POP

# 1 8.067331 6.954096

# 2 8.365638 6.962341

add_vars(wlddev, get_vars(wlddev, 9:13) %>% lapply(log) %>% add_stub("log."),

get_vars(wlddev, 9:13) %>% lapply(log10) %>% add_stub("log10."),

pos = c(10L,13L,16L,19L,22L,11L,14L,17L,20L,23L)) %>% head(2)

# country iso3c date year decade region income OECD PCGDP log.PCGDP log10.PCGDP

# 1 Afghanistan AFG 1961-01-01 1960 1960 South Asia Low income FALSE NA NA NA

# 2 Afghanistan AFG 1962-01-01 1961 1960 South Asia Low income FALSE NA NA NA

# LIFEEX log.LIFEEX log10.LIFEEX GINI log.GINI log10.GINI ODA log.ODA log10.ODA POP

# 1 32.446 3.479577 1.511161 NA NA NA 116769997 18.57572 8.067331 8996973

# 2 32.962 3.495355 1.518014 NA NA NA 232080002 19.26259 8.365638 9169410

# log.POP log10.POP

# 1 16.01240 6.954096

# 2 16.03138 6.962341

identical(cbind(wlddev, wlddev), add_vars(wlddev, wlddev))

# [1] TRUE

microbenchmark(cbind(wlddev, wlddev), add_vars(wlddev, wlddev))

# Unit: microseconds

# expr min lq mean median uq max neval

# cbind(wlddev, wlddev) 13.694 14.1040 15.72760 14.391 14.7600 57.072 100

# add_vars(wlddev, wlddev) 3.280 3.6285 4.13567 3.813 4.0385 19.352 1002.6 Renaming Columns

frename is a fast substitute for

dplyr::rename:

frename(GGDC10S, AGR = Agriculture, MIN = Mining) %>% head(2)

# Country Regioncode Region Variable Year Agriculture Mining MAN PU CON WRT TRA FIRE

# 1 BWA SSA Sub-saharan Africa VA 1960 NA NA NA NA NA NA NA NA

# 2 BWA SSA Sub-saharan Africa VA 1961 NA NA NA NA NA NA NA NA

# GOV OTH SUM

# 1 NA NA NA

# 2 NA NA NA

frename(GGDC10S, tolower) %>% head(2)

# country regioncode region variable year agr min man pu con wrt tra fire gov oth sum

# 1 BWA SSA Sub-saharan Africa VA 1960 NA NA NA NA NA NA NA NA NA NA NA

# 2 BWA SSA Sub-saharan Africa VA 1961 NA NA NA NA NA NA NA NA NA NA NA

frename(GGDC10S, tolower, cols = .c(AGR, MIN)) %>% head(2)

# Country Regioncode Region Variable Year agr min MAN PU CON WRT TRA FIRE GOV OTH SUM

# 1 BWA SSA Sub-saharan Africa VA 1960 NA NA NA NA NA NA NA NA NA NA NA

# 2 BWA SSA Sub-saharan Africa VA 1961 NA NA NA NA NA NA NA NA NA NA NAThe function setrename does this by reference:

setrename(GGDC10S, AGR = Agriculture, MIN = Mining)

head(GGDC10S, 2)

# Country Regioncode Region Variable Year Agriculture Mining MAN PU CON WRT TRA FIRE

# 1 BWA SSA Sub-saharan Africa VA 1960 NA NA NA NA NA NA NA NA

# 2 BWA SSA Sub-saharan Africa VA 1961 NA NA NA NA NA NA NA NA

# GOV OTH SUM

# 1 NA NA NA

# 2 NA NA NA

setrename(GGDC10S, Agriculture = AGR, Mining = MIN)

rm(GGDC10S)Both functions are not limited to data frames but can be applied to any R object with a ‘names’ attribute.

2.7 Using Shortcuts

The most frequently required among the functions introduced above can

be abbreviated as follows: fselect -> slt,

fsubset -> sbt, ftransform(v) -> tfm(v),

settransform(v) -> settfm(v),

get_vars -> gv, num_vars -> nv,

add_vars -> av. This was done to make it possible to

write faster and more parsimonious code, but is recommended only for

personally kept scripts. A lazy person may also decide to code

everything using shortcuts and then do ctrl+F replacement with the long

names on the finished script.

2.8 Missing Values / Rows

The function na_omit is a much faster alternative to

stats::na.omit for vectors, matrices and data frames. By

default the ‘na.action’ attribute containing the removed cases is

omitted, but it can be added with the option

na.attr = TRUE. Like fsubset,

na_omit preserves all column attributes as well as

attributes of the data frame itself.

microbenchmark(na_omit(wlddev, na.attr = TRUE), na.omit(wlddev))

# Unit: microseconds

# expr min lq mean median uq max neval

# na_omit(wlddev, na.attr = TRUE) 60.393 69.208 84.8126 79.9910 88.683 419.881 100

# na.omit(wlddev) 745.790 856.449 1721.5457 940.6015 1005.177 56344.414 100Another added feature is the removal of cases missing on certain columns only:

na_omit(wlddev, cols = .c(PCGDP, LIFEEX)) %>% head(2)

# country iso3c date year decade region income OECD PCGDP LIFEEX GINI

# 1 Afghanistan AFG 2003-01-01 2002 2000 South Asia Low income FALSE 330.3036 56.784 NA

# 2 Afghanistan AFG 2004-01-01 2003 2000 South Asia Low income FALSE 343.0809 57.271 NA

# ODA POP

# 1 1790479980 22600770

# 2 1972890015 23680871

# only removing missing data from numeric columns -> same and slightly faster than na_omit(wlddev)

na_omit(wlddev, cols = is.numeric) %>% head(2)

# country iso3c date year decade region income OECD PCGDP

# 1 Albania ALB 1997-01-01 1996 1990 Europe & Central Asia Upper middle income FALSE 1869.866

# 2 Albania ALB 2003-01-01 2002 2000 Europe & Central Asia Upper middle income FALSE 2572.721

# LIFEEX GINI ODA POP

# 1 72.495 27.0 294089996 3168033

# 2 74.579 31.7 453309998 3051010For atomic vectors the function na_rm also exists which

is 2x faster than x[!is.na(x)]. Both na_omit

and na_rm return their argument if no missing cases were

found.

The existence of missing cases can be checked using

missing_cases, which is also considerably faster than

complete.cases for data frames.

There is also a function na_insert to randomly insert

missing values into vectors, matrices and data frames. The default is

na_insert(X, prop = 0.1) so that 10% of values are randomly

set to missing.

Finally, a function allNA provides the much needed

opposite of anyNA for atomic vectors.

2.9 Unique Values / Rows

Similar to na_omit, the function funique is

a much faster alternative to base::unique for atomic

vectors and data frames. Like most collapse functions it also

seeks to preserve attributes.

funique(GGDC10S$Variable) # Unique values in order of appearance

# [1] "VA" "EMP"

# attr(,"label")

# [1] "Variable"

# attr(,"format.stata")

# [1] "%9s"

funique(GGDC10S$Variable, sort = TRUE) # Sorted unique values

# [1] "EMP" "VA"

# attr(,"label")

# [1] "Variable"

# attr(,"format.stata")

# [1] "%9s"

# If all values/rows are unique, the original data is returned (no copy)

identical(funique(GGDC10S), GGDC10S)

# [1] TRUE

# Can remove duplicate rows by a subset of columns

funique(GGDC10S, cols = .c(Country, Variable)) %>% ss(1:2, 1:8)

# Country Regioncode Region Variable Year AGR MIN MAN

# 1 BWA SSA Sub-saharan Africa VA 1960 NA NA NA

# 2 BWA SSA Sub-saharan Africa EMP 1960 NA NA NA

funique(GGDC10S, cols = .c(Country, Variable), sort = TRUE) %>% ss(1:2, 1:8)

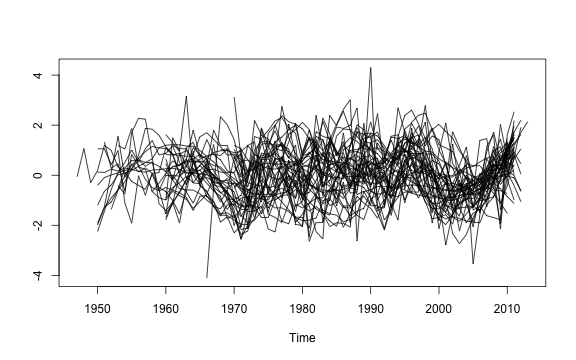

# Country Regioncode Region Variable Year AGR MIN MAN